Before using the robot mower, I always power the blade motors on in my garage for a few minutes to make sure everything is working right. I pulled our vehicles out of the garage, told the missus to expect some loud noises, and made sure that Mr. Mower Jr. was safe inside the house.

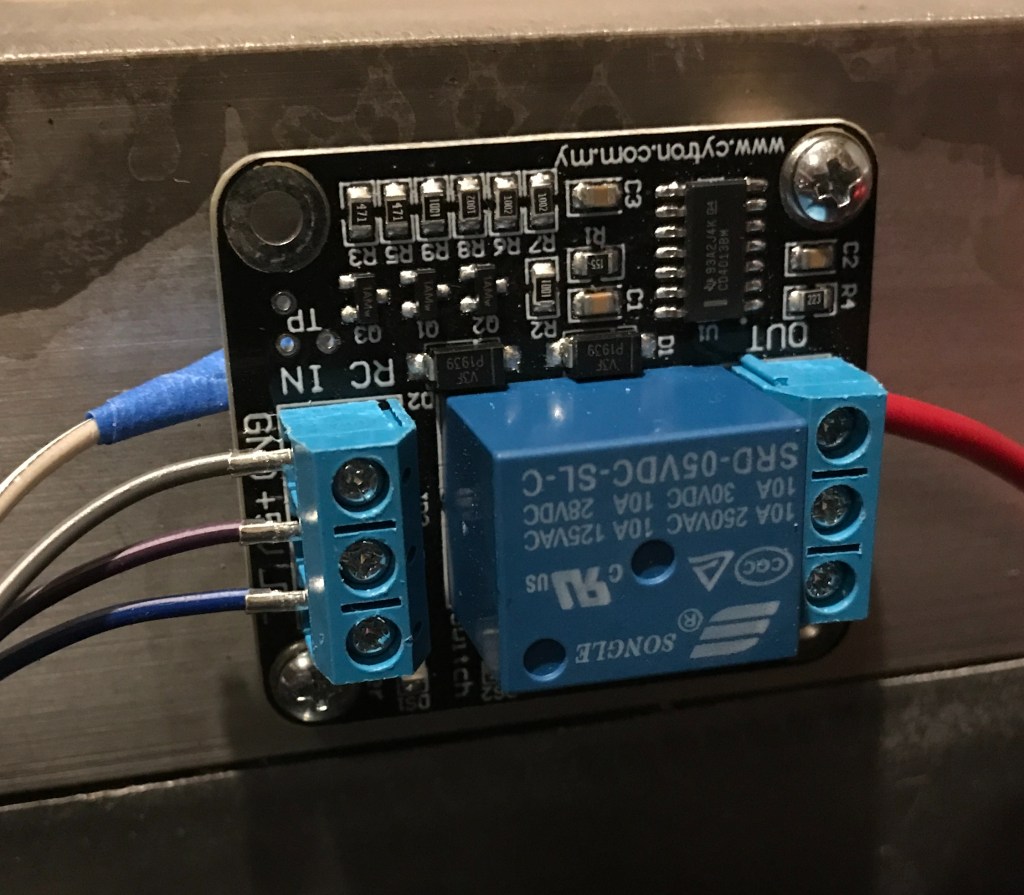

The nice thing about the new motor controller I’m using on the blade motors is that I can vary the rotation speed of the blades. So instead of commanding full throttle from the get-go, as I had been doing with a relay, I decided to slowly ramp up the speed. It’s pretty incredible listening to the crescendo of the blades as they spool up.

About halfway to full throttle, I started to get a ridiculous amount of vibration. It was extraordinarily loud. The vibration was so intense that it peeled open the eye of one of my deck support eyebolts.

At first, I thought this was just a fluke, or that perhaps the eyebolt was already close to failure, so I replaced it with a spare and repeated the process. I’m not very smart. The result was identical.

When I was in engineering school, I had to choose between Design of Heat Exchangers and Vibrations and Acoustics as a technical elective. As you can probably guess by looking at my mower deck design, I chose Design of Heat Exchangers. It was a fun class, but pretty useless for anything mower related.

So to work through this issue, I’ve had to do some homework. Lucky for me, I managed to stumble upon a copy of Timoshenko’s *Vibration Problems in Engineering* at the Goodwill a few years ago, which has proven a valuable resource in my vibrations education.

Timoshenko says it better than I can, so I will quote him directly:

> By an impulse or sudden application and removal of an external force, vibrations of the [spring mass] system can be produced. Such vibrations are maintained by the elastic force in the spring alone and are called free or natural vibrations.
>
> *Vibration Problems in Engineering*, page 1

The mower deck is a kind of spring-mass system, though geometrically more complex. There is a frequency at which it will vibrate if you hit it with a hammer, just as a tuning fork tuned to A vibrates at 440 Hz when you strike it against a hard surface. That characteristic frequency is called the system’s natural frequency, or frequency of free vibration.
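
For the simplest lumped model, a single mass on a spring, the natural frequency follows directly from stiffness and mass: ω_n = √(k/m) in rad/s, or f_n = ω_n / 2π in Hz. The real deck is far more complex, so treat this as a minimal sketch of the idea; the stiffness and mass below are hypothetical placeholders, not measurements of my deck.

```python
import math

def natural_frequency_hz(stiffness_n_per_m: float, mass_kg: float) -> float:
    """Undamped natural frequency of a lumped spring-mass system, in Hz.

    omega_n = sqrt(k / m) is in rad/s; dividing by 2*pi converts it to Hz.
    """
    omega_n = math.sqrt(stiffness_n_per_m / mass_kg)
    return omega_n / (2.0 * math.pi)

# Hypothetical values for illustration only; nothing here was measured on the deck.
k_assumed = 250_000.0  # N/m, assumed effective stiffness of the deck supports
m_assumed = 15.0       # kg, assumed effective vibrating mass
print(f"f_n is about {natural_frequency_hz(k_assumed, m_assumed):.1f} Hz")
```

Because f_n scales with √(k/m), stiffer supports raise it and extra mass lowers it, and quadrupling the stiffness only doubles the natural frequency.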

Unfortunately, we’re not just hitting the mower deck with a hammer once. At 4800 RPM, we’re hitting it with a hammer 4800 times per minute, or 80 times per second. That oscillating force does not allow the mower deck to vibrate freely. That’s not free vibration. It’s forced vibration.

In the case of the mower deck, the frequency of that forced vibration is set by how fast the motors are spinning. Because the motors go from zero to 4800 RPM (0 to 80 Hz), the mower deck experiences forced vibration at every frequency along the way. If the deck’s natural frequency falls anywhere in that range, the motors will excite it at some point as they spool up to operating speed.
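
The arithmetic is just a unit conversion: divide RPM by 60 to get revolutions per second (Hz). The sketch below assumes the dominant force comes from rotating imbalance, so there is one "hammer blow" per revolution; real rotors can also produce forces at multiples of shaft speed, which this ignores. The 40 Hz deck natural frequency is an arbitrary value picked for illustration, not a measurement.

```python
def rpm_to_hz(rpm: float) -> float:
    """Shaft speed (rev/min) to forcing frequency (Hz), assuming one blow per revolution."""
    return rpm / 60.0

def resonance_rpm(natural_freq_hz: float) -> float:
    """Shaft speed at which a once-per-revolution force matches a given natural frequency."""
    return natural_freq_hz * 60.0

FULL_SPEED_RPM = 4800.0
top_hz = rpm_to_hz(FULL_SPEED_RPM)
print(f"{FULL_SPEED_RPM:.0f} RPM = {top_hz:.0f} Hz")

# Hypothetical deck natural frequency, chosen arbitrarily for illustration.
deck_fn_hz = 40.0
if 0.0 < deck_fn_hz <= top_hz:
    print(f"Spool-up crosses resonance at about {resonance_rpm(deck_fn_hz):.0f} RPM")
else:
    print("Resonance lies outside the operating range")
```

Any natural frequency between 0 and 80 Hz lands inside the spool-up range, which is why a gradual ramp is practically guaranteed to pass through it.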

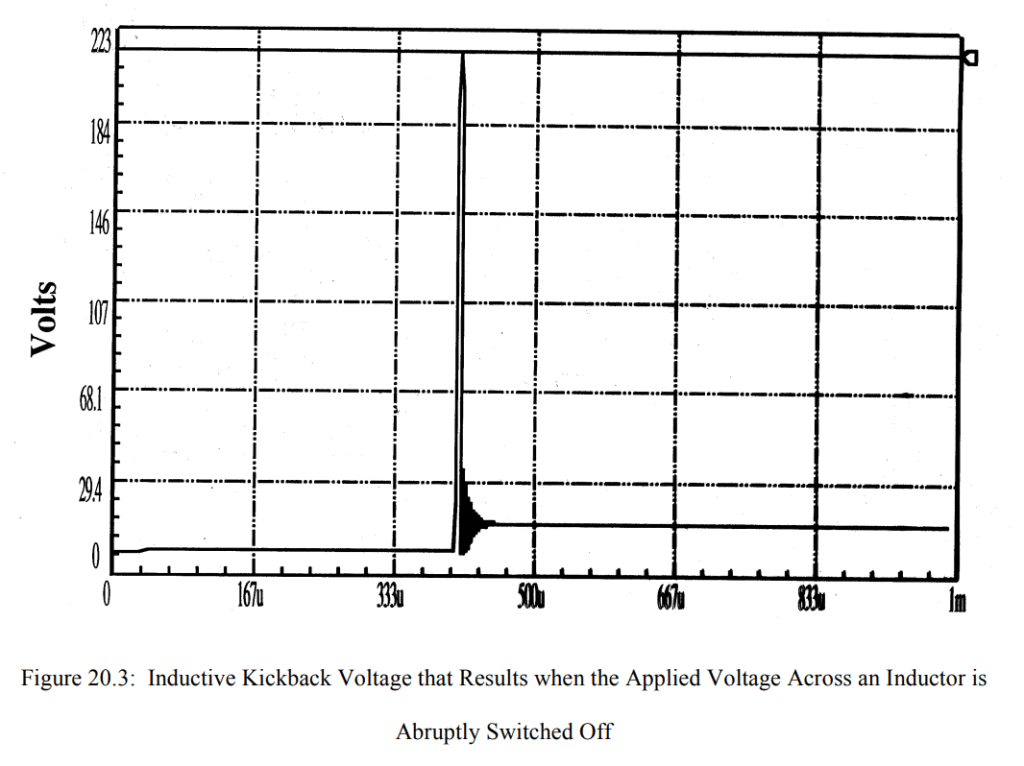

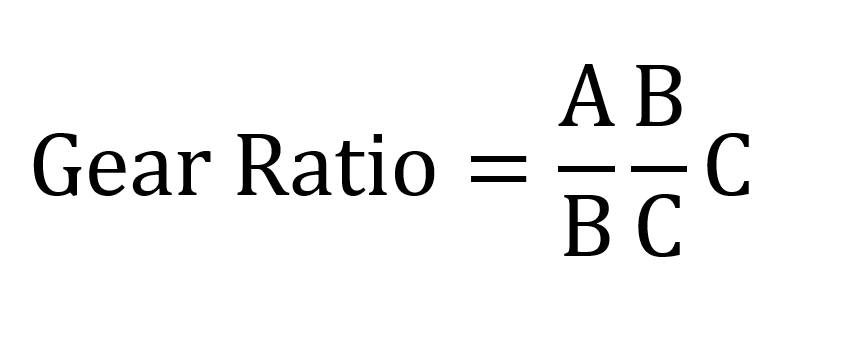

A very curious thing happens when you force a structure to vibrate at its natural frequency: you get resonance, and the amplitude of vibration becomes very large. In the absence of damping, it theoretically grows without bound. Timoshenko includes a chart that illustrates this phenomenon very well.
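
The standard steady-state result for a damped spring-mass system driven by a force of constant amplitude F₀ captures the same behavior: the amplitude, divided by the static deflection F₀/k, is 1/√((1 − r²)² + (2ζr)²), where r is the ratio of forcing frequency to natural frequency and ζ is the damping ratio. The sketch below tabulates it for a few assumed damping ratios; none of them are values for the mower deck.

```python
import math

def magnification_factor(freq_ratio: float, damping_ratio: float) -> float:
    """Steady-state amplitude divided by the static deflection F0/k for a damped
    single-degree-of-freedom system under a constant-amplitude harmonic force.

    freq_ratio:    r = forcing frequency / natural frequency
    damping_ratio: zeta = c / (2 * sqrt(k * m))
    """
    r, zeta = freq_ratio, damping_ratio
    return 1.0 / math.sqrt((1.0 - r ** 2) ** 2 + (2.0 * zeta * r) ** 2)

# Sweep the forcing frequency past resonance for a few assumed damping ratios.
ratios = (0.5, 0.9, 1.0, 1.1, 2.0)
for zeta in (0.02, 0.1, 0.5):
    row = [magnification_factor(r, zeta) for r in ratios]
    print(f"zeta={zeta:<4}", " ".join(f"{x:6.2f}" for x in row))
```

At r = 1 the factor reduces to 1/(2ζ), so lighter damping means a taller resonance peak. With ζ = 0 and r = 1 the expression divides by zero (Python raises `ZeroDivisionError`), which is the math version of "infinite".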

When I was spooling up the mower deck motors, I consciously decided to do it slowly to minimize electrical current spikes. This created a problem that didn’t exist with the relay, which brought the motors up to speed almost immediately. Timoshenko explains:

> The amplitude of vibration [at resonance] increases indefinitely with time… This shows that, while we theoretically obtain infinite amplitude of forced vibration at resonance in the absence of damping, it also takes time to build up these large amplitudes.
>
> *Vibration Problems in Engineering*, page 48

In other words, if we keep forcing a structure at its natural frequency, the amplitude of vibration keeps growing until whatever damping is present in the system limits it.
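
To see the growth over time, the sketch below integrates a spring-mass system driven exactly at its natural frequency, starting from rest, using a classic fourth-order Runge-Kutta step. Units are normalized (natural frequency 1 rad/s, static deflection 1), the force has constant amplitude, and the damping ratio of 0.05 is an assumption for comparison, not a property of the deck. `simulate_resonant_drive` is a hypothetical helper name.

```python
import math

def simulate_resonant_drive(zeta: float, cycles: int = 200, steps_per_cycle: int = 200):
    """Integrate x'' + 2*zeta*wn*x' + wn^2*x = (F0/m)*sin(wn*t) from rest with RK4.

    Normalized units: wn = 1 rad/s and static deflection F0/k = 1, so the
    returned values are multiples of the static deflection.
    Returns the peak |x| reached in each forcing cycle.
    """
    wn = 1.0
    static_deflection = 1.0
    f_over_m = wn ** 2 * static_deflection  # F0/m = wn^2 * (F0/k)
    dt = (2.0 * math.pi / wn) / steps_per_cycle

    def accel(t, x, v):
        return f_over_m * math.sin(wn * t) - 2.0 * zeta * wn * v - wn ** 2 * x

    t, x, v = 0.0, 0.0, 0.0
    peaks = []
    for _ in range(cycles):
        peak = 0.0
        for _ in range(steps_per_cycle):
            # Classic 4th-order Runge-Kutta step for the state (x, v).
            k1x, k1v = v, accel(t, x, v)
            k2x, k2v = v + 0.5 * dt * k1v, accel(t + 0.5 * dt, x + 0.5 * dt * k1x, v + 0.5 * dt * k1v)
            k3x, k3v = v + 0.5 * dt * k2v, accel(t + 0.5 * dt, x + 0.5 * dt * k2x, v + 0.5 * dt * k2v)
            k4x, k4v = v + dt * k3v, accel(t + dt, x + dt * k3x, v + dt * k3v)
            x += dt * (k1x + 2 * k2x + 2 * k3x + k4x) / 6.0
            v += dt * (k1v + 2 * k2v + 2 * k3v + k4v) / 6.0
            t += dt
            peak = max(peak, abs(x))
        peaks.append(peak)
    return peaks

undamped = simulate_resonant_drive(zeta=0.0)
damped = simulate_resonant_drive(zeta=0.05)
for n in (1, 10, 50, 200):
    print(f"cycle {n:3d}: undamped peak {undamped[n - 1]:7.1f}, damped peak {damped[n - 1]:5.1f}")
```

Without damping, the peak keeps climbing roughly in proportion to elapsed time (about π times the cycle count in these units). With damping, it levels off near the steady-state value 1/(2ζ).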

By slowly increasing the speed of the motors, I kept the mower deck near resonance long enough for those large vibration amplitudes to build up and damage the turnbuckle eyes. Who’d have thought?
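
The effect of ramp speed can be sketched by sweeping the drive frequency linearly from standstill to twice the natural frequency over different ramp times and recording the peak response. This assumes the same normalized spring-mass model, a hypothetical damping ratio of 0.02, and a constant-amplitude force (a real rotating imbalance produces a force that grows with the square of speed). `peak_during_spool_up` is a made-up helper name.

```python
import math

def peak_during_spool_up(ramp_time: float, zeta: float = 0.02, end_ratio: float = 2.0,
                         hold_time: float = 100.0, dt: float = 0.01) -> float:
    """Peak |x| of x'' + 2*zeta*x' + x = sin(phase(t)) while the drive frequency
    ramps linearly from 0 to end_ratio * wn over ramp_time, then holds.

    Normalized units: wn = 1 rad/s and static deflection F0/k = 1.
    """
    w_end = end_ratio  # final forcing frequency in rad/s (wn = 1)

    def phase(t):
        # Integral of the linear ramp w(t) = w_end * t / ramp_time, then constant speed.
        if t <= ramp_time:
            return 0.5 * w_end * t * t / ramp_time
        return 0.5 * w_end * ramp_time + w_end * (t - ramp_time)

    def accel(t, x, v):
        return math.sin(phase(t)) - 2.0 * zeta * v - x

    t, x, v, peak = 0.0, 0.0, 0.0, 0.0
    while t < ramp_time + hold_time:
        # Classic 4th-order Runge-Kutta step for the state (x, v).
        k1x, k1v = v, accel(t, x, v)
        k2x, k2v = v + 0.5 * dt * k1v, accel(t + 0.5 * dt, x + 0.5 * dt * k1x, v + 0.5 * dt * k1v)
        k3x, k3v = v + 0.5 * dt * k2v, accel(t + 0.5 * dt, x + 0.5 * dt * k2x, v + 0.5 * dt * k2v)
        k4x, k4v = v + dt * k3v, accel(t + dt, x + dt * k3x, v + dt * k3v)
        x += dt * (k1x + 2 * k2x + 2 * k3x + k4x) / 6.0
        v += dt * (k1v + 2 * k2v + 2 * k3v + k4v) / 6.0
        t += dt
        peak = max(peak, abs(x))
    return peak

peaks = {ramp: peak_during_spool_up(ramp) for ramp in (20.0, 200.0, 2000.0)}
for ramp, peak in peaks.items():
    print(f"ramp {ramp:6.0f} s: peak {peak:5.1f} x static deflection")
```

Under these assumptions, the shorter ramp gives a smaller peak because the system spends less time near resonance for the amplitude to build. The model ignores motor torque limits, so it says nothing about the current spikes that motivated the slow ramp in the first place.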

Something else I noticed was that I had trouble getting the motors to speed up once they were close to the mower deck’s natural frequency, where the vibration got really bad. Timoshenko has something interesting to say about that, too:

> Experiments show that if any vibrating system is once allowed to reach a steady state just below resonance, it then becomes difficult to accelerate the machine through the resonance condition. Additional power supplied for this purpose is simply used up in increasing the amplitude of vibration rather than the running speed of the machine.
>
> *Vibration Problems in Engineering*, page 48

I think I have a good grip on what the problem is. In the next post, I’ll discuss some things that can be done to mitigate this surprising issue.