The lack of activity here on MowerProject.com hasn’t been due to a lack of autonomous lawn mower development. This June we were blessed with a baby boy, and he has been taking up a lot of our time! Posting to the blog in the meantime has been difficult.

Juggling my 8 to 5, the mower project, and making time for Mr. Mower Jr. has been quite challenging. I owe a special thanks to my wonderful wife for letting me run errands for the project and work on it in the evenings. She is truly something special! Having her to bounce ideas off of and to encourage me along the way has been invaluable. I love you so much, Mrs. Mower!

As I’ve done in the past, I find it’s good to set goals. Even if I don’t achieve them, they focus my mind and give me a sense of accomplishment and purpose as I work toward them. Below are some goals I have for the next few months.

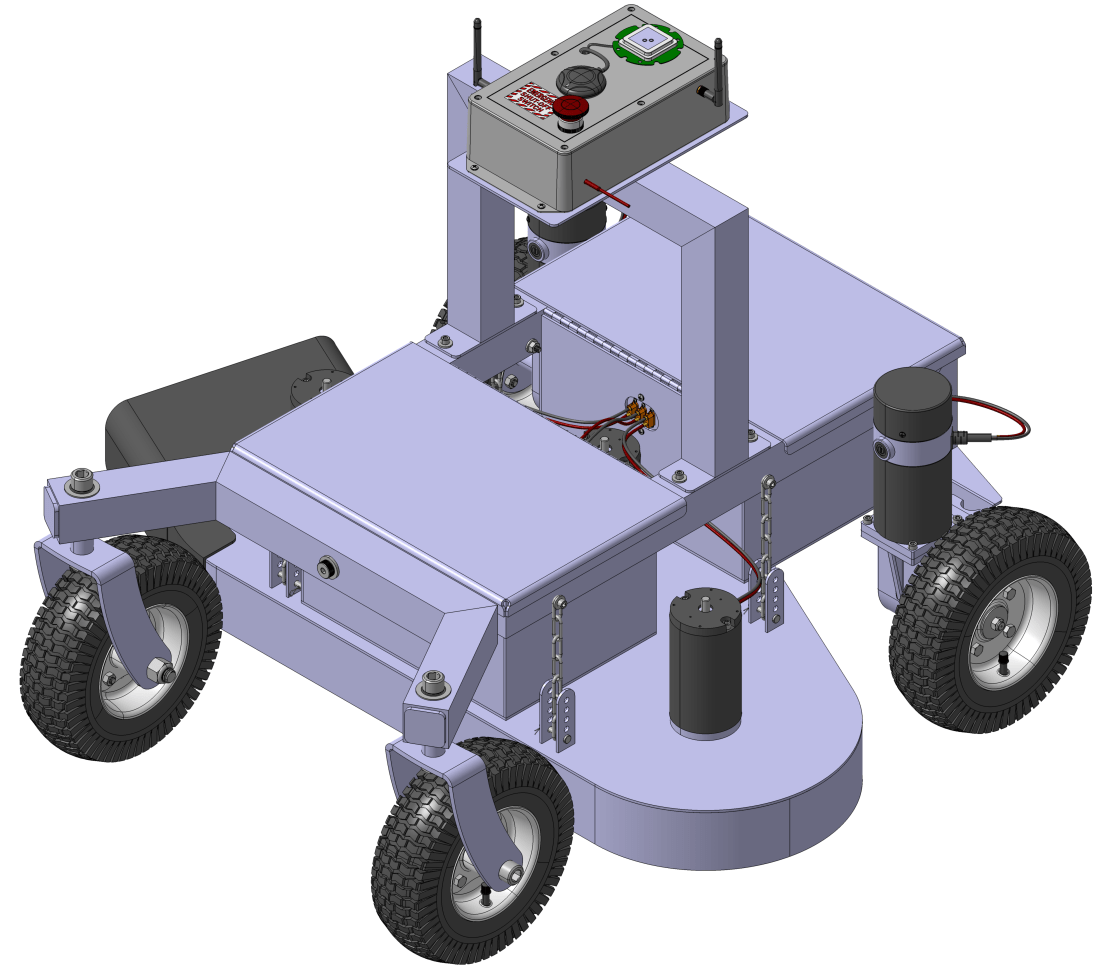

Finish Procurement and Begin Assembly

I have all of the parts to build the prototype robot lawn mower except for two more SLA batteries and new enclosures for the electronics. The SLA batteries are so cheap that I think they’re worth a gamble instead of ponying up the $1,000+ I’d need for good-quality LiFePO4 batteries.

Once those items arrive I will start to wire up the enclosures and get the subassemblies put together. I will probably need to make another wiring diagram showing how everything connects and functions before I get too far along.

Support Fabrication of the Prototype Weldments

Originally I had planned to fabricate a lot of the robot lawn mower components myself, on the theory that buying my own tools and making the parts would be cheaper. Unfortunately, that plan requires a lot of time, a resource I’m very much short on these days with Mr. Mower Jr. in the picture. The economics of paying someone to make the parts suddenly look attractive again.

To this end I’ve found a local gentleman who is helping me make the weldments. I find myself over at his shop every week or so answering questions about the design, and working with him has been very productive. His feedback has helped me simplify and refine the design so that it is easy to fabricate.

Functional Testing

I have a litany of questions about the prototype’s performance that I need answered before we even cut grass. A few of them:

- How long can the mower run on four 12V, 35Ah SLA batteries?

- How well does the prototype handle pivot turns?

- How much current does the entire robot draw during typical operation?

- Do the emergency stop and safety features function correctly?

- How much air flow does the current mower deck and blade design create?
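Before any testing, the battery runtime question can at least be roughed out on paper. Below is a minimal Python sketch that divides the pack's usable energy by an average power draw. The 500 W draw and the 50% usable fraction (a common rule of thumb for SLA batteries) are placeholder assumptions, not measurements from the prototype, and the function name is made up for illustration. It also ignores the fact that SLA capacity drops at higher discharge rates, so the real number will likely be lower.

```python
def estimate_runtime_hours(num_batteries, voltage_v, capacity_ah,
                           avg_power_w, usable_fraction=0.5):
    """Rough runtime estimate: usable pack energy divided by average power.

    usable_fraction is an assumed depth-of-discharge limit (0.5 is a
    common rule of thumb for SLA batteries, not a measured value).
    """
    if avg_power_w <= 0:
        raise ValueError("avg_power_w must be positive")
    if not 0 < usable_fraction <= 1:
        raise ValueError("usable_fraction must be in (0, 1]")

    # Total stored energy is the same whether the batteries are wired
    # in series or in parallel: count * volts * amp-hours = watt-hours.
    total_energy_wh = num_batteries * voltage_v * capacity_ah
    return total_energy_wh * usable_fraction / avg_power_w


if __name__ == "__main__":
    # Four 12 V, 35 Ah SLA batteries, as in the question above.
    # The 500 W average draw is a hypothetical placeholder.
    hours = estimate_runtime_hours(4, 12.0, 35.0, avg_power_w=500.0)
    print(f"Estimated runtime: {hours:.2f} hours")
```

Once the current draw has actually been measured, that figure (multiplied by the pack voltage) can replace the placeholder power value to get a more realistic estimate.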

Once I’m satisfied the design adequately answers these questions, we can start cutting grass. Stay tuned for some pictures of the fabrication so far!